
Another Apple Worldwide Developers Conference (WWDC) has come and gone, and with it comes another update to SKAdNetwork (SKAN), this time with changes that make it a far more usable solution for advertisers. If SKAN 1.0 was the Minimum Viable Product (MVP), you might say SKAN 4.0 brings us to the beta release stage, as it becomes more widely usable. That is a slightly different release approach from what we’re seeing with Google’s Privacy Sandbox for Android.

Let’s dive into what was announced.

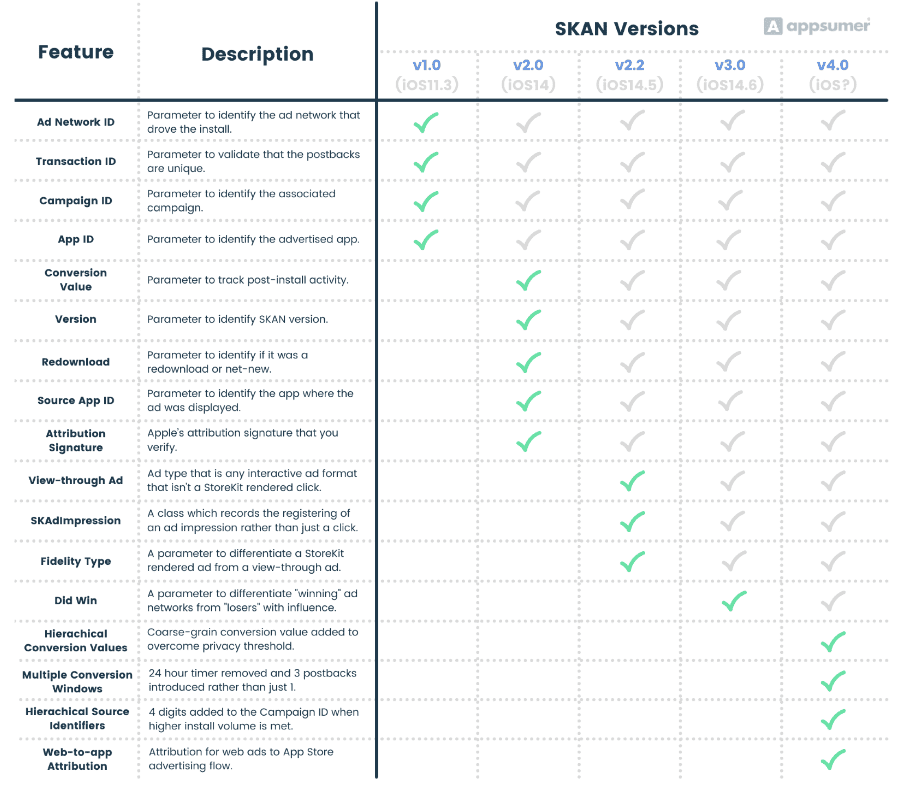

If you’re keeping score, here’s what we’ve seen through different SKAN releases up to now.

Now let’s take a more detailed look at the new features in SKAN 4.0.

The privacy threshold has likely had the biggest negative impact on advertisers’ ability to spend on iOS post-ATT. When a campaign did not reach a certain volume of installs, the understanding of install value was lost for a high percentage of paid installs. We suspected this privacy threshold was around 20-30 installs per campaign in general, and around 128 installs per campaign on Facebook due to its Campaign ID mechanics.

A sizeable share of data was lost (~20%, depending on campaign granularity), so the new handling of the privacy threshold is very welcome. It is a change to Conversion Values that delivers some indication of install value even when install volume is low.

There are now two types of Conversion Values returned:

In an important change for some use cases, Conversion Values can now go down as well as up. Previously, they could only increase.
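To make the two value types more concrete, here is a minimal Python sketch. It assumes the ranges Apple documents for SKAN 4.0: a fine-grained value is an integer from 0 to 63, and a coarse value is one of low, medium or high. The revenue thresholds, the $0.50 step and the function names `fine_value` and `coarse_value` are hypothetical, chosen only for illustration; each advertiser defines their own mapping.

```python
# Hypothetical revenue bands, checked from highest to lowest.
COARSE_THRESHOLDS_USD = [(10.0, "high"), (1.0, "medium")]
# Assumption for illustration: each fine-grained step represents $0.50.
FINE_STEP_USD = 0.50


def fine_value(revenue_usd: float) -> int:
    """Map revenue to a fine-grained conversion value in the range 0-63."""
    if revenue_usd < 0:
        raise ValueError("revenue must be non-negative")
    return min(63, int(revenue_usd // FINE_STEP_USD))


def coarse_value(revenue_usd: float) -> str:
    """Map revenue to a coarse conversion value: low, medium or high."""
    if revenue_usd < 0:
        raise ValueError("revenue must be non-negative")
    for threshold, label in COARSE_THRESHOLDS_USD:
        if revenue_usd >= threshold:
            return label
    return "low"
```

Because values are no longer restricted to increasing, a mapping like this can be recomputed downwards, for example if net revenue for a user falls from $12.00 (`fine_value` returns 24) to $7.00 (`fine_value` returns 14).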

Key considerations for advertisers:

With only a single SKAN postback, advertisers have faced a critical balancing act: capturing enough information about the value of an install, without delaying data too long for analysis and without letting the 24-hour timer lapse through inactivity. Much like the solution Google announced, multiple Conversion Windows mean advertisers no longer have to strike this balance.
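As a rough illustration of how multiple windows change the reporting logic, the Python sketch below buckets in-app events into the three postback windows Apple announced for SKAN 4.0 (days 0-2, 3-7 and 8-35 after install). Treating the boundaries as whole days is a simplification, and the function names and the `(day, revenue)` event format are hypothetical.

```python
from typing import Dict, Iterable, Optional, Tuple

# (window number, first day, last day), in whole days since install.
WINDOWS = [(1, 0, 2), (2, 3, 7), (3, 8, 35)]


def postback_window(day: int) -> Optional[int]:
    """Return the window (1-3) covering the given day, or None if outside all."""
    for number, first_day, last_day in WINDOWS:
        if first_day <= day <= last_day:
            return number
    return None


def revenue_by_window(events: Iterable[Tuple[int, float]]) -> Dict[int, float]:
    """Sum (day, revenue) events per window, ignoring events outside all windows."""
    totals: Dict[int, float] = {}
    for day, revenue in events:
        window = postback_window(day)
        if window is not None:
            totals[window] = totals.get(window, 0.0) + revenue
    return totals
```

In this sketch, an event on day 5 contributes to the second window and an event on day 40 is dropped, since it falls after the last window closes.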

These key changes to Conversion Windows have been announced:

Key considerations for advertisers:

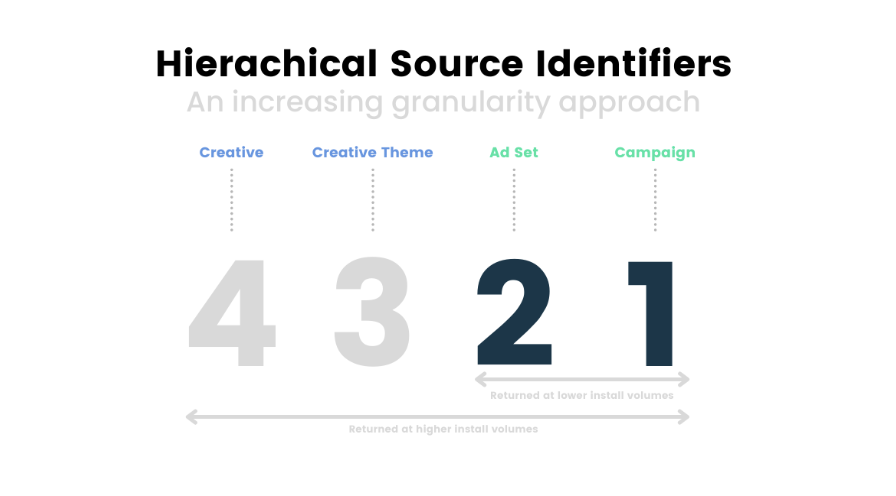

The 2-digit Campaign ID was a limiting factor for things like testing creatives and formats, particularly on Facebook, which reserved one digit for its own testing. Hierarchical source identifiers add two extra digits, which opens up welcome data richness for testing creatives and formats.

Hierarchical source identifiers essentially expand the Campaign ID field from two digits to four, albeit with caveats:

Key consideration for advertisers:

Increase granularity with volume: To ensure you at least understand high-level campaign information even on low volume campaigns, it’s probably worth going from least granular to most granular in order of preference when structuring the Campaign ID. For example, see the below visualization.
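The least-to-most-granular ordering can be sketched in Python as follows. This is only an illustration under stated assumptions: the field names (`campaign`, `geo`, `creative`) are hypothetical, and the sketch assumes that when fewer than four digits are returned, the surviving digits are the rightmost ones (so `5739` could come back as `739` or `39`). Check Apple’s postback documentation before relying on that behaviour.

```python
from typing import Dict


def build_source_id(campaign: int, geo: int, creative: int) -> str:
    """Build a 4-digit source identifier.

    campaign (0-99) is the least granular field and sits in the two digits
    assumed to be always returned; geo and creative (0-9 each) add detail.
    """
    if not 0 <= campaign <= 99:
        raise ValueError("campaign must be 0-99")
    if not (0 <= geo <= 9 and 0 <= creative <= 9):
        raise ValueError("geo and creative must be 0-9")
    return f"{creative}{geo}{campaign:02d}"


def parse_source_id(returned: str) -> Dict[str, int]:
    """Decode a 2-, 3- or 4-digit identifier as received in a postback."""
    if not returned.isdigit() or len(returned) not in (2, 3, 4):
        raise ValueError("expected 2, 3 or 4 digits")
    fields = {"campaign": int(returned[-2:])}  # always available
    if len(returned) >= 3:
        fields["geo"] = int(returned[-3])  # only with enough volume
    if len(returned) == 4:
        fields["creative"] = int(returned[-4])  # highest-volume case only
    return fields
```

With this layout, a low-volume campaign still reports its campaign number, while the finer fields only appear as install volume grows.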

Although web-to-app attribution hasn’t been a key demand we’ve seen from app-first advertisers, if you run a hybrid web and app business then SKAN’s inability to attribute web-to-app flows has likely been a big pain point. SKAN now covers web-to-app attribution when the web ad directs users to an app’s App Store product page. This is a long overdue improvement for many verticals.

Advertisers who have been falling back on fingerprinting should consider this Apple’s final warning. A privacy session at WWDC was very clear that fingerprinting is not allowed. Whether Apple will police the wild west of fingerprinting by rejecting app updates or by introducing technological solutions is still unclear. What is clear is that fingerprinting is not a grey area: it is without doubt against Apple’s policy.

Apple wants you to use SKAN and overall these are really welcome improvements for SKAN. If Apple are to clamp down on fingerprinting and SKAN becomes the dominant attribution solution for iOS, these changes make it more viable. In particular, still getting some idea of install value when the privacy threshold is not met will drive more iOS ad spend, as this has been a central challenge of SKAN.

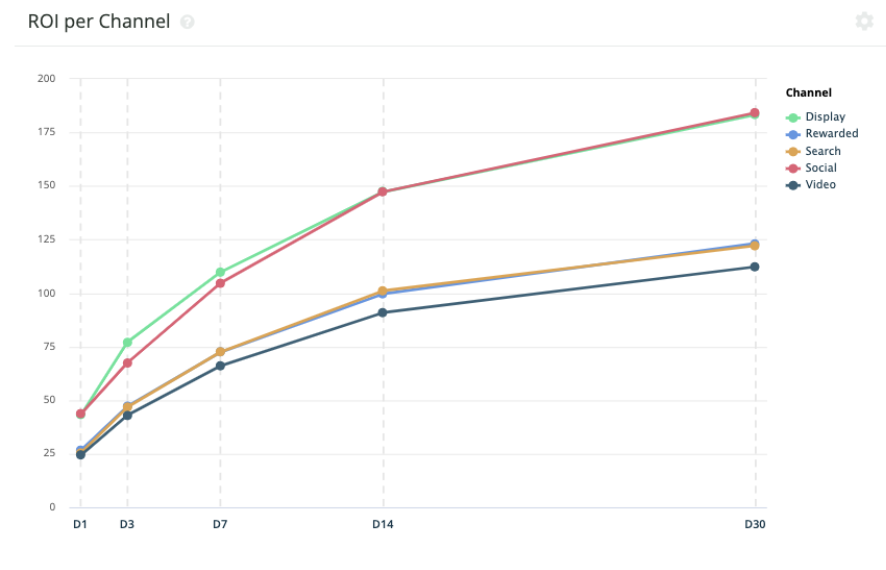

Now is the time to get behind SKAN. What these changes also introduce, however, is far more data complexity within the walls of SKAN itself, which advertisers will need to make sense of. Far more normalization and unification of SKAN data will be needed. This requires an additional BI data layer on top of your MMP to manipulate, normalize and unify this data and turn it into an apples-to-apples comparison across channels and operating systems that delivers performance insights. Just a few areas of data manipulation that this update introduces are:
